Model trends: Championship, 2016/17

With the regular Championship season over, I thought that I should update the graphics which track my model predictions to see how each team performed relative to its predictions.

Explanation

The way it works is pretty simple: for pre-season and after every round of games, each graphic shows the results of my simulations for a specific club in terms of both:

- How their average predicted final league position has changed (the solid line in the top chart)

- How their predicted probability of ending up in each section of the table has changed (the coloured columns in the bottom chart)
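To make those two measures concrete, here's a minimal sketch of how they could be derived from one club's simulated final positions after a given round. It isn't the actual code behind the graphics: the section boundaries, the `summarise` function and the sample positions are all assumptions made up for illustration.

```python
from collections import Counter

# Hypothetical sections for a 24-team league (assumed, not taken from the charts)
SECTIONS = {
    "automatic promotion": range(1, 3),   # 1st-2nd
    "play-offs": range(3, 7),             # 3rd-6th
    "upper mid-table": range(7, 13),      # 7th-12th
    "lower mid-table": range(13, 22),     # 13th-21st
    "relegation": range(22, 25),          # 22nd-24th
}


def summarise(final_positions):
    """Return (average position, probability per section) for one club.

    final_positions holds the club's finishing position in each simulated
    season, as run after a single round of games.
    """
    n = len(final_positions)
    average = sum(final_positions) / n

    # Count how many simulations ended in each section of the table
    counts = Counter()
    for pos in final_positions:
        for name, band in SECTIONS.items():
            if pos in band:
                counts[name] += 1
                break

    probs = {name: counts[name] / n for name in SECTIONS}
    return average, probs


# Made-up output from ten simulations (a real run would use far more)
positions = [1, 2, 2, 3, 5, 1, 2, 4, 2, 8]
avg, probs = summarise(positions)
print(avg)    # the point plotted on the solid line for this round
print(probs)  # the heights of the coloured columns for this round
```

Repeating this after every round gives one point on the solid line and one stack of columns per round.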

The point of this is to show how the model’s assessment of a club’s prospects has changed after each round of games, but I also wanted some idea of how good a predictor it is. I’ve therefore added (as a dashed line) each club’s actual league position and briefly assessed (under each graphic) how well it’s predicted this so far.

I’m not expecting a 100% accuracy rate for a variety of reasons, including:

- As Leicester recently reminded us, football is notoriously difficult to predict and the strongest team doesn’t always win

- The rating system that drives the predictions can take a little while to adjust to sudden changes (e.g. a big tactical shift, replacing the manager or a lot of transfer activity)

- While I’m pretty happy with the rating system and model, there’s limited data available in the lower leagues and therefore it may miss some subtleties in the way certain teams perform

Anyway, onto the graphics. There’s one for every team along with a brief summary and my view of how well the model’s predicted their fortunes.

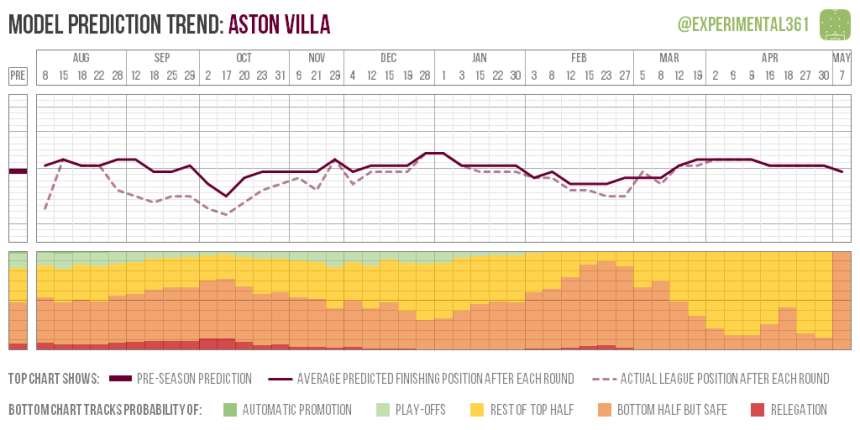

Villa have been a mid-table side all season in the model’s eyes, thanks to the dismal way they exited the Premier League being factored into the pre-season assessment of 13th (which is where they ended up). During their poor start (the dipping dashed line) performances weren’t terrible so the predictions didn’t alter all that much and their recent resurgence has brought things back pretty much full circle.

Model performance: Pre-season prediction was bang on

Barnsley were expected to finish in lower mid-table at the start of the season (not unusual for a side promoted via the play-offs) and the model hasn’t been all that impressed with their performances this season. In late November it looked as though their bubble might have burst, but a successful festive period kept them in the top half of the table until reality started to bite during the run-in.

Model performance: Too pessimistic until the halfway point

Gary Rowett consistently defied the model during his spell at Birmingham so I’ll be interested to see if he can repeat the trick with a different set of players at Derby. His sacking from Birmingham triggered an eye-watering collapse in results that ended up making the model look prescient, although we obviously can’t be sure that wouldn’t have happened anyway.

Model performance: Predictions of a relegation battle weren’t that far off

The model correctly predicted Blackburn’s difficult season after their disappointing finish to the previous campaign and unfortunately there’s not been much evidence to sway it. They were barely out of the bottom three all season and at no point did the model think that they might escape.

Model performance: Correctly foresaw a horrible season

Brentford performed well last season – particularly up front – and were expected by the model to be an outside challenger for the play-offs this time around. However the defence hasn’t been anywhere near as impressive and a poor run saw their promotion prospects start to evaporate in November. A top half finish never looked in doubt though.

Model performance: Slightly too optimistic at first, but predicted their late revival

Brighton finished strongly last season and were an automatic promotion favourite right from the start. A bumpy beginning wasn’t sufficient to shake the model’s faith – they were constantly predicted to secure a top two finish – and since late October even finishing outside the top six looked almost unthinkable.

Model performance: Pre-season prediction was spot on

Having finished last season in relatively good shape, the model was expecting a solid mid-table finish from the Robins this time around. However after a bright start that seemed to bear this out, the wheels started to fall off in November and the slide took a long time to arrest. Strangely the balance of chances created remained relatively healthy during this bad run, so it’s tempting to conclude that the problem is psychological.

Model performance: Didn’t see their sustained collapse coming

Burton looked sufficiently convincing in gaining promotion last season that the model expected them to steer clear of the relegation scrap. As the season went on, those margins became finer thanks to some wasteful finishing and poor chance creation generally, but the big call predicting their survival was eventually shown to be the right one.

Model performance: A bit too optimistic, but got the big question right

Cardiff’s strong finish to last season saw the model peg them for a top half finish this time around, but the extent of their poor start was sufficient to deflate its pre-season prediction. However it remained consistently north of the Bluebirds’ league position until Neil Warnock’s improvements started to bear fruit.

Model performance: Correct to retain optimism, although swayed too much by their poor start

Many were expecting a strong campaign from Derby but some hilariously wasteful finishing saw the model quickly revise its expectations downward. Recovering to challenge for the play-offs was always going to be a big ask and the change of manager hasn’t yet yielded sufficient improvement.

Model performance: Too optimistic in pre-season, but quickly adjusted after their poor start

Fulham’s attack alone was sufficiently impressive to suggest a play-off challenge in pre-season and an improved defence has made them a force to be reckoned with. The model has been consistently optimistic about their play-off chances this season, even when they were hovering in mid-table.

Model performance: Justifiably optimistic from the beginning

Huddersfield look to have underachieved last season based on some encouraging performances, so the model tipped them as play-off outsiders this time around. The Terriers have exceeded even those expectations although some relatively modest showings overall suggest that they may have overachieved slightly.

Model performance: Correct to be optimistic, but didn’t go far enough

Ipswich’s ongoing shortcomings in attack led the model to predict a difficult season and a brush with relegation, but they’ve maintained a comfortable distance from the drop zone. Performances have remained sluggish, so it took the model until March to revise its assessment upwards, and its predictions will be pessimistic again next season.

Model performance: Too pessimistic for much of the season

Garry Monk has exceeded all expectations this season, including those of the model which still rates Leeds as a mid-table side rather than a play-off challenger. A poor run-in saw them drop out of the play-offs, but it’s too soon to tell whether that was a blip or the bubble bursting.

Model performance: Too pessimistic and ongoing scepticism is yet to be justified

It wasn’t exactly controversial to make Newcastle favourites for the title, but the model was particularly bullish given how much their performances were already improving towards the end of last season. Even a shaky start wasn’t sufficient to move their predicted finish below second place and they’ve looked nailed on to go up since November.

Model performance: Correctly called their title win

Even when they were riding high earlier in the season, the model thought that the play-offs were a more realistic goal for Norwich than automatic promotion. The Canaries’ poor run thereafter saw them drop as low as 12th in the table but they always looked likely to recover some of that ground, even though the play-offs looked unreachable from March onwards.

Model performance: Not far off, although a little optimistic to begin with

Forest were expected to finish in lower mid-table after a bumpy end to last season but the model had no way of anticipating the chaos that has unfolded off the pitch. It had adjusted by October and retained a pessimistic outlook thereafter, although their recent performances convey plenty of optimism for next season.

Model performance: Adjusted relatively quickly to problems behind the scenes

A solid return to the Championship last season was expected to continue and a mid-table prediction held relatively firm despite a shaky start. A top half finish had looked to be the most probable outcome as early as October and became increasingly likely as the season wore on.

Model performance: Pre-season prediction ended up being pretty close

The model expected a season of consolidation for QPR and maintained that they’d end up in lower mid-table despite a bright start. At the turn of the year the possibility of relegation reared its head briefly, but Ian Holloway has defied expectations and turned their fortunes around.

Model performance: Pretty close and only briefly swayed by lurches in fortune

There are always a few clubs that end up surprising the model in a given season – football is a relatively difficult sport to predict – but few have ever done so to the same extent as Reading. Expected to struggle against relegation and not doing anything to suggest otherwise on paper, they’ve continued to get results in defiance of what their performance data suggests. They’ve either ridden a lot of luck (for which there is some evidence, e.g. an unusually high number of penalties awarded) or they’re doing something that the data isn’t able to measure (e.g. they may be far better organised than the average team).

Model performance: Didn’t see this coming and is still in denial

Neil Warnock’s rescue act at Rotherham last season was achieved without improving the Millers’ poor underlying performances all that much and so the model was very down on them from the beginning. Unfortunately it’s been proved painfully correct, with relegation having looked a near-certainty as early as October and nailed on since late November.

Model performance: Unfortunately bang on

The model really likes Sheffield Wednesday and has had them pegged for a play-off finish all season (even during their slow start). Their performances have continued to improve overall from an already-impressive base, although they haven’t always gotten the requisite rewards.

Model performance: Pre-season prediction – and ongoing optimism – ended up being correct

Wigan’s strong showing in getting promoted made the model optimistic that they could avoid a relegation battle, but their early season performances saw that quickly revised downwards. Frustratingly the Latics’ recent performances have been better but results haven’t followed suit, and a return to League 1 has looked all but inevitable since early March.

Model performance: Adjusted quickly from overly optimistic pre-season prediction

The model’s had a soft spot for Wolves all season and I still maintain that they should have given Walter Zenga more time based on how promisingly he’d started. However Paul Lambert has definitely improved them – albeit without getting the results to go with their performances – and the optimistic assessments have gone unfulfilled (for now at least).

Model performance: Ongoing optimism hasn’t been justified
